1 – How we got here

When mission-critical applications were all implemented within your data center, capacity management seemed easy – Most organizations deployed infrastructure sets (compute, network, and storage) to meet their 95 to 99% capacity requirement. For a retailer, that might mean planning around a “Black Friday” date (Cyber-Monday wasn’t around then). For a bank or a manufacturer using ERP or financial systems, this might instead be the end of year close. I’m sure you can think of a date for your own organization’s highwater mark for resource requirements. The results of this approach were massive overcapacity and high expense levels – but it worked “back in the day.”

Keep in mind that this was when loadings were lower, mobile wasn’t on the scene, and at first, these were physical only systems.

Next up was virtualized infrastructure, overwhelmingly on VMware for the compute layer, with separate dedicated physical servers and enterprise storage environments to support transaction processing needs. Network devices started to support application metering to ensure that mission-critical applications had the bandwidth they needed to meet SLAs in that same worst-case (Black Friday) scenario. This model allowed for more flexibility, with the option of expanding infrastructure within the limits of on-premises or hosted environments – still within the bounds of ‘owned’ infrastructure but allowing for better cost-efficiency. Capacity management here often meant that you had enough spare compute, network, and storage that you could repurpose to meet a relatively predictable top-end load.

When the cloud came on the scene, we often called these virtualized environments “private clouds.”

2 – Where we are today

Now, we’re well past the initial adoption era for cloud – and the vast majority of mission-critical applications are hybrid, with a mix of public cloud and backend data center or hosted resources. Data centers disproportionally have critical storage environments or legacy backend systems to enable organizations to maximize control of storage access speeds and transactions. In many cases, application infrastructure has spread to wherever an organization’s journey through digital transformation has taken them.

- Single virtualization environments have morphed to multiple environments with two or more hypervisors.

- Compute resources have a presence on-site, but the vast majority now also include a permanent component in single or multiple vendor public cloud environments to allow scaling of that layer as needed.

- Networks now have multiple implementations – physical and software-defined on-premises and software-defined implementations in cloud environments.

But that’s not all that’s changed. Add in the exponential increase in demand for access to services, and it’s a new world of clients that can consume mission-critical application resources:

- Traditional PCs in the hundreds of millions

- Billions of mobile devices across the globe

- A tsunami of IoT devices that have only starting to build

Storage needs are also growing massively, even as the demand for responsive user experiences increases. And peaks are no longer just the “Black Friday” scenario – peak scaling is still periodic, but with less predictable, more frequent peak loads. Intelligent workload optimization and scaling within internal private clouds have become critical, enabling the right local resources can be available at the right time. What’s more, with public cloud-based infrastructure (even public cloud systems deployed in your data center), cost now enters the picture more than ever with highly variable costs that can be hard to predict for cloud infrastructure. Physical backend storage and systems must balance with highly scalable cloud resource sets – and decisions about reserved capacity, scaling, instance types, locations, and networking are critical to ensure uptime and meet SLAs.

3 – What do these changes mean for capacity management?

What do all of these changes mean for capacity management? If you are supporting one of these mission-critical applications, it’s a sea change for applications views, monitoring, and management for capacity:

- Context – application and location-centric views of infrastructure: Application-centric topologies are required, combined with detailed relationship data about infrastructure elements, their locations, capacities, and status. Context has to include both the application, detailed cloud/virtual/physical environments and any other uses of resources upon which the application is dependent.

- Continuous discovery of applications and infrastructure – with capacity analysis and thresholds automatically applied: With auto-scaling now available with compute, network, and storage layers, continuous discovery can’t be optional. What’s needed is continuous application level and infrastructure element discovery – with best-practices capacity rules (or your organization’s equivalent) across the local and cloud environments underlying your hybrid application.

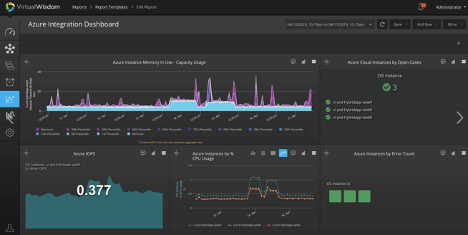

- Siloed tools won’t do: The tools from any single infrastructure element offering, or cloud vendor’s environment are insufficient for the task. Siloed solutions that cover limited infrastructure elements can’t tell you enough to prevent unplanned downtime and keep performance SLAs.

- Balancing performance, availability, and cost are more important than ever: Having a mission-critical application doesn’t mean that you have a Manhattan Project budget for managing it. Good decisions are required about the allocation of limited resources on-site and minimizing the costs of bursting and scaling to cloud resource sets. The alternative is more over-provisioning and overcapacity combined with large and unpredictable cloud service bills.

- Long-term data sets with granular data: Tools that capture only snapshots of short data baselines for hours, days, or even weeks aren’t enough. Long term data is required. And that data needs to be at the most granular level available – collecting data from infrastructure elements and minimizing the losses of insight caused by averaging data points. Some problems can’t be avoided (or found when they occur) without the most detailed sets of long-term data.

- AIOps – Powered by AI, machine learning, and statistical modeling and algorithmic correlation tools in addition to traditional trending and baselining: And making sense of all this data, all of these environments, and all of these capabilities requires intelligence that isn’t available from yesterday’s tools. Given the complexity of managing even the more straightforward mission-critical applications of the past, working within today’s highly-scalable, highly variable environments requires intelligence that is applied to every aspect of capacity management from cost to resource allocation to availability and more.

- Full integration between application-level tools, infrastructure management, monitoring, ITSM (or DevOps) resources, and cloud environment tools: It’s not enough for even a high level of infrastructure capacity management to operate as a standalone tool – integration with application-level management tools like AppDynamics or DynaTrace along with ITSM resources such as ServiceNow (or DevOps operations) are hard requirements. And hybrid infrastructure management and monitoring without a view into the cloud resource stack, services, performance, and cost isn’t a solution that will work.

No one else puts the full picture for hybrid applications and infrastructure together like Virtana’s VirtualWisdom solution. If you’d like to learn more about Virtana solutions, consider one of our free trials – or take a quick look at Virtana today with this quick video from our CEO, Philippe Vincent.

James Bettencourt