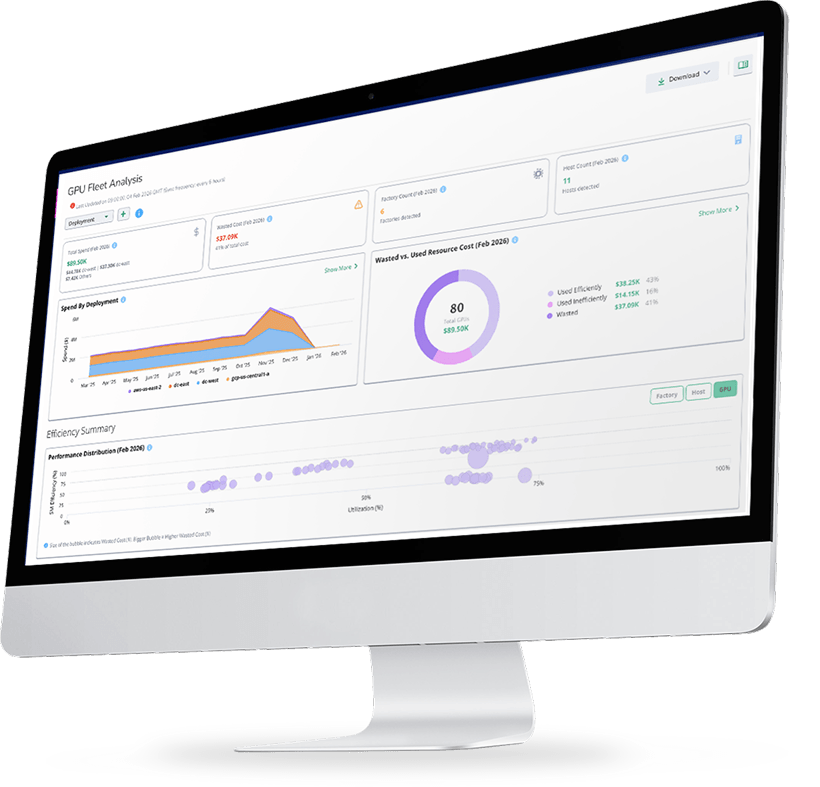

Virtana redefines AI Factory Observability by treating the GPU cluster, data fabric, and model pipeline as a single, high-performance system.

When training jobs stall or inference latency spikes, teams stop guessing at hardware bottlenecks and start proving exactly where the constraint lives, from InfiniBand congestion to fragmented GPU memory, before costs spiral and schedules slip.

Full-Stack AI Observability

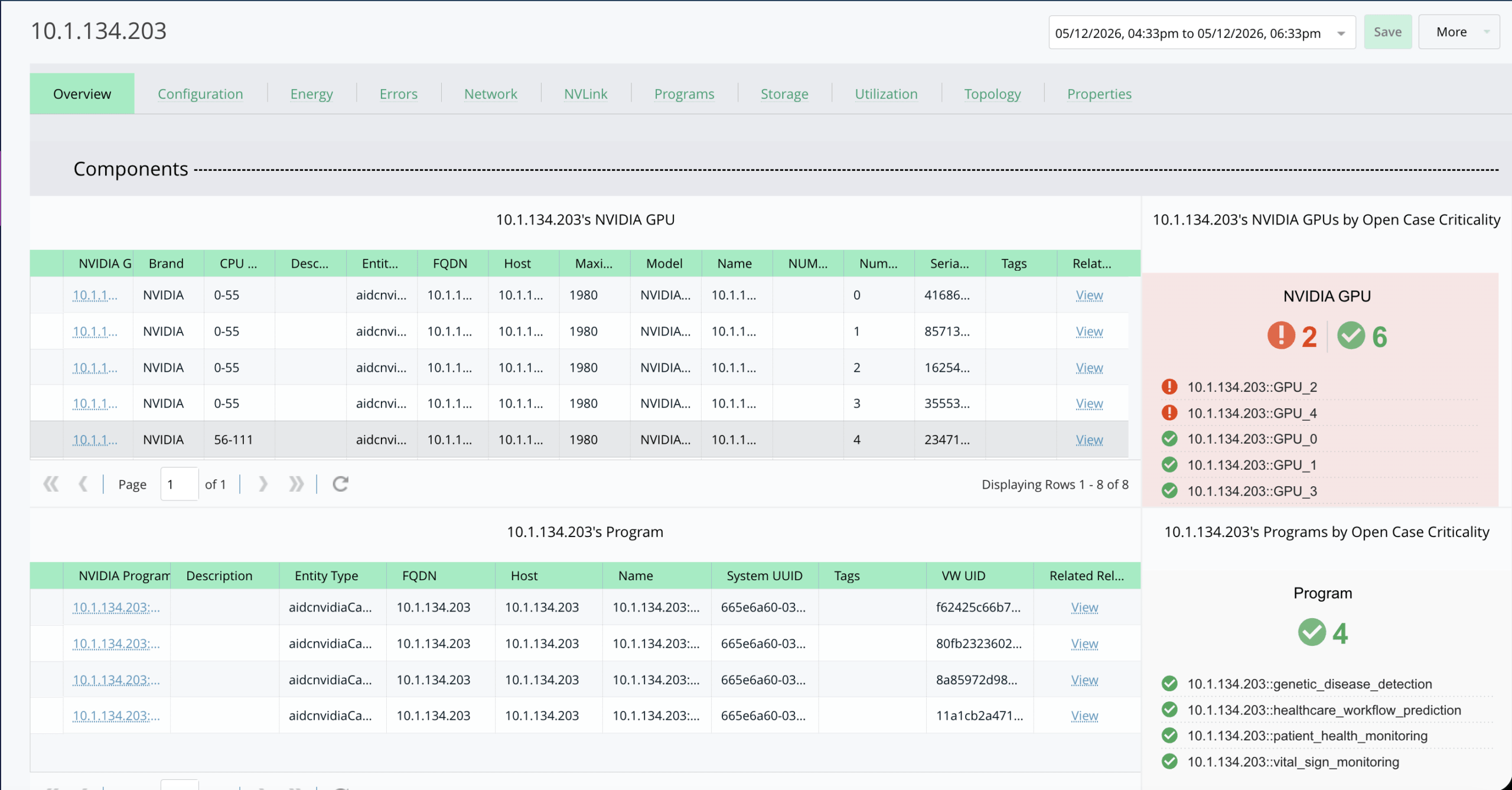

See the Full AI Factory

- Application-to-infrastructure mapping.

- GPU, memory, and workload utilization insights.

- Cross-stack correlation for faster root-cause analysis.

GPU Monitoring Software

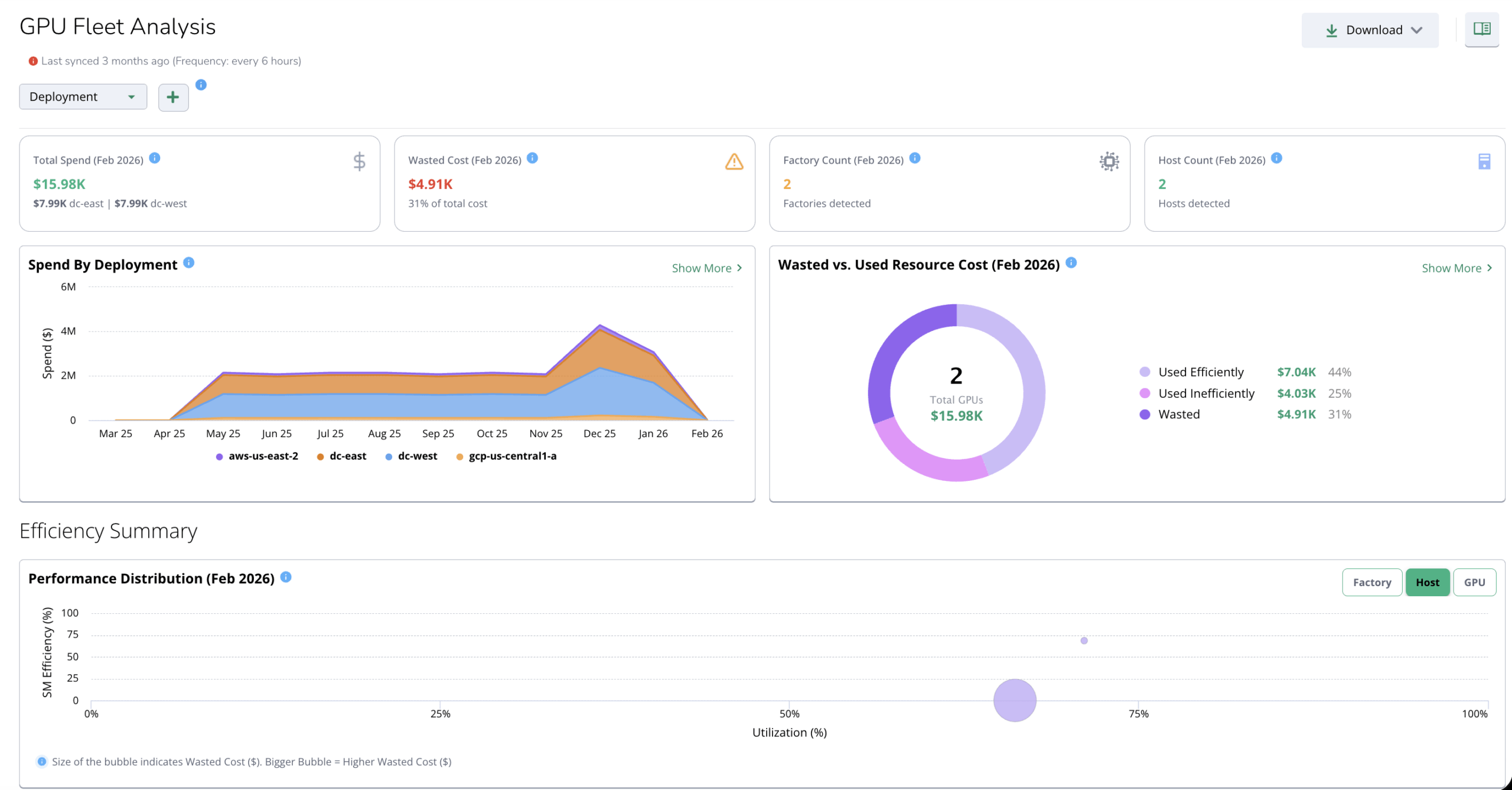

GPU Performance & Cost Optimization

- Detect idle and wasted GPU capacity.

- Identify throttling, contention, and misallocation.

- Use deeper GPU visibility to optimize utilization and reduce infrastructure spend.

GPU Infrastructure

Power & Sustainability Intelligence

- Monitor power consumption, thermals, and efficiency.

- Identify energy waste from idle resources.

- Support sustainable AI operations at scale with system-aware AI oversight.

AI Data Fabric

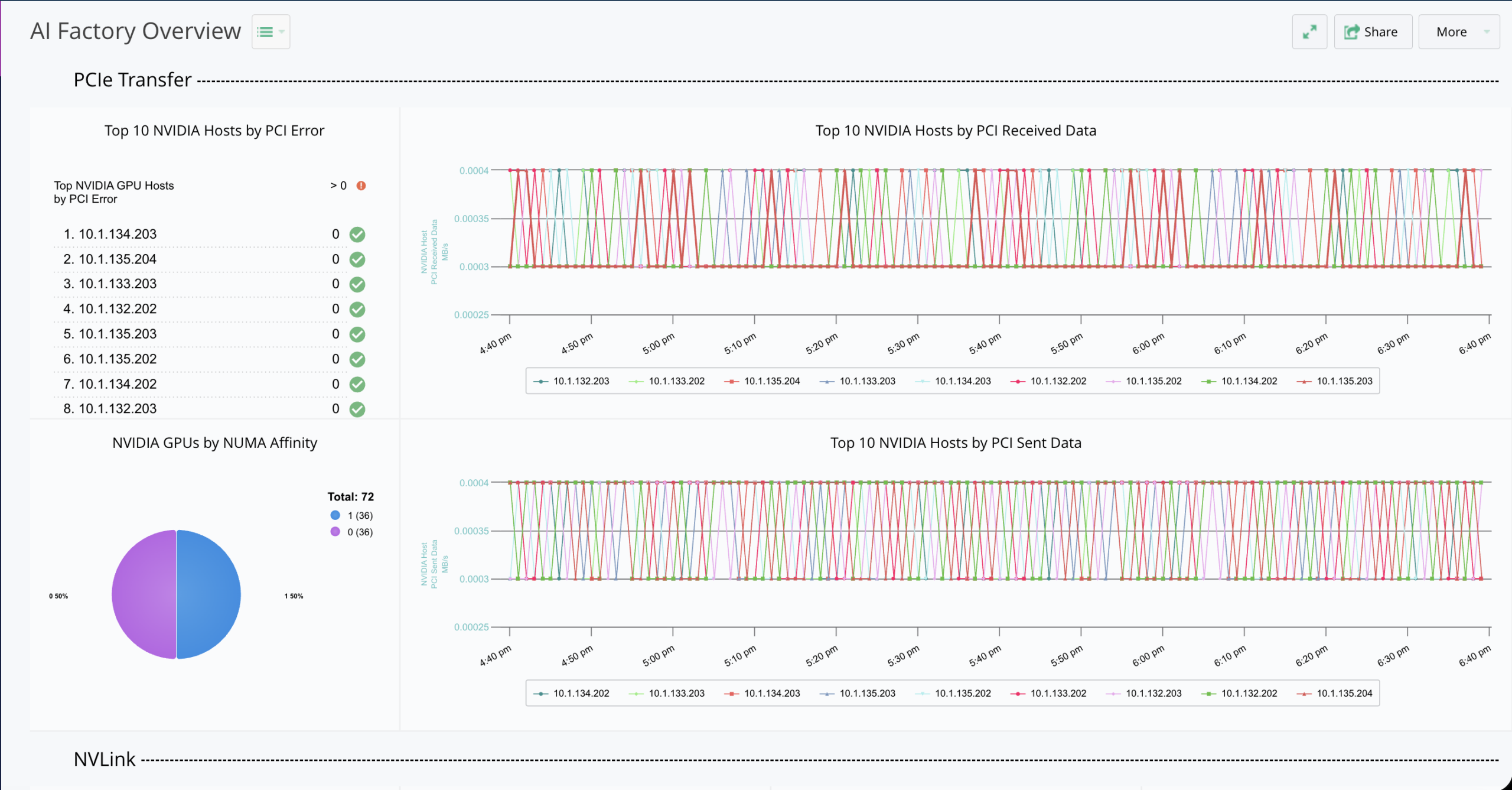

Network & Data Flow Observability

- Analyze NVLink and PCIe throughput.

- Detect network congestion and data bottlenecks.

- Understand how data movement impacts performance across the AI data fabric.

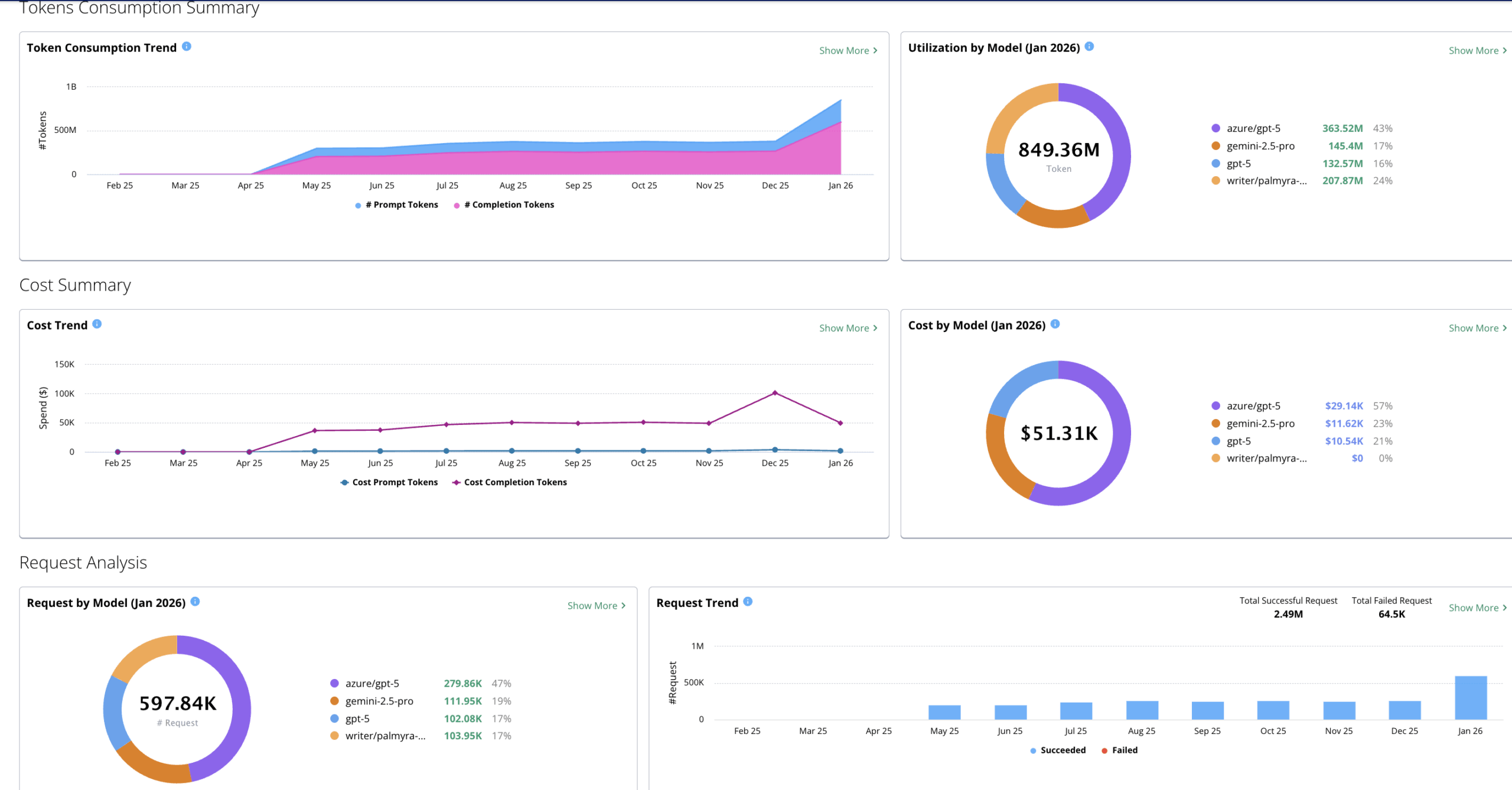

LLM Cost Optimization

Token Economics & Forecasting

- Cloud: Follow dynamic services and dependencies across multi-cloud environments.

- On-premises: Deep visibility from bare metal through virtualization and platforms.

- Hybrid: Correlate signals across distributed infrastructure without visibility gaps using data fabric observability.

- Air-gapped: Built for secure, disconnected environments, including federal and mission-critical deployments.

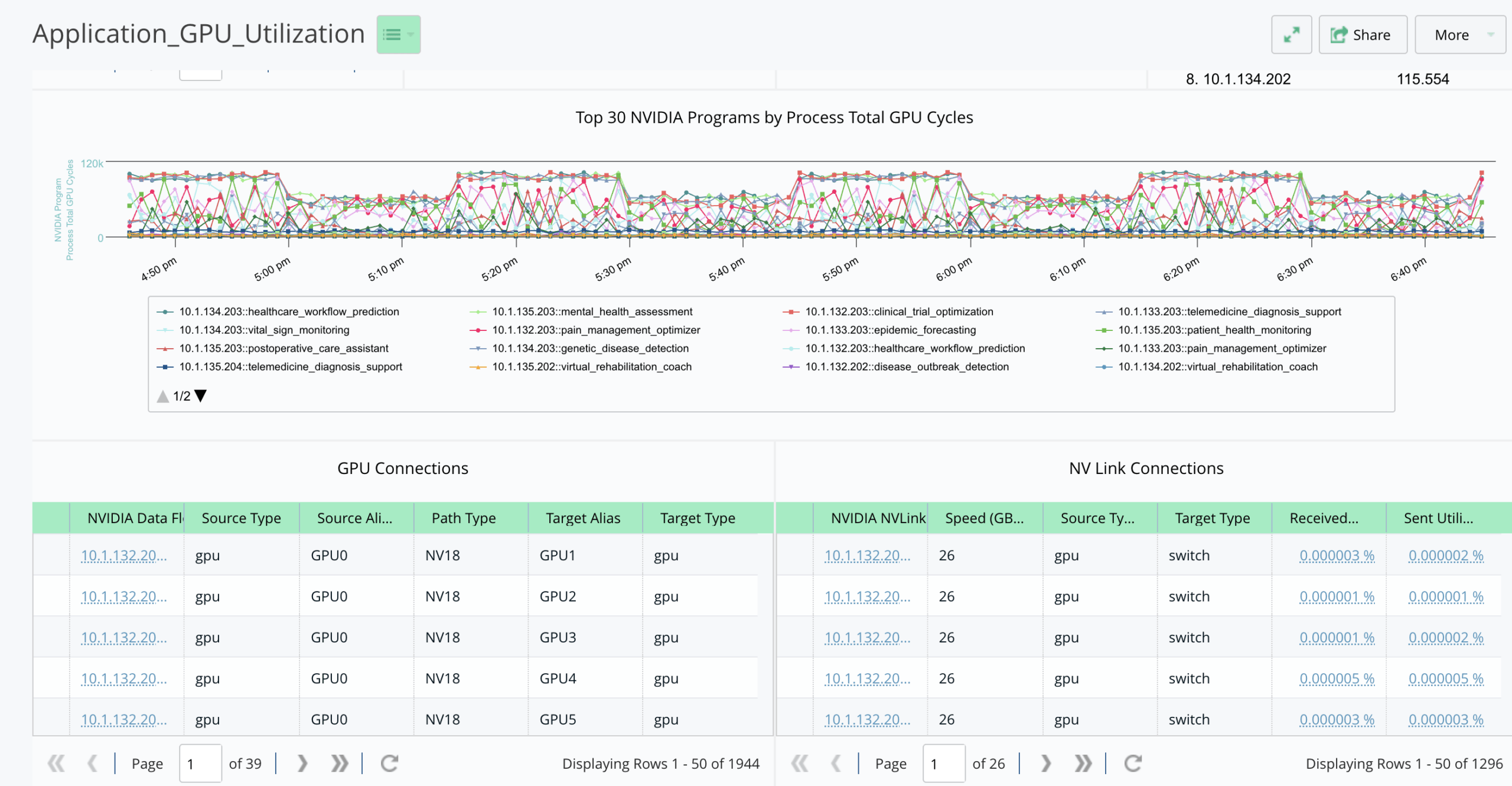

Model Performance Monitoring

Training & Inference Performance

- Measure GPU cycles, memory, and energy per job.

- Compare workload efficiency across environments for model training and inference pipelines.

- Detect performance regressions and instability.

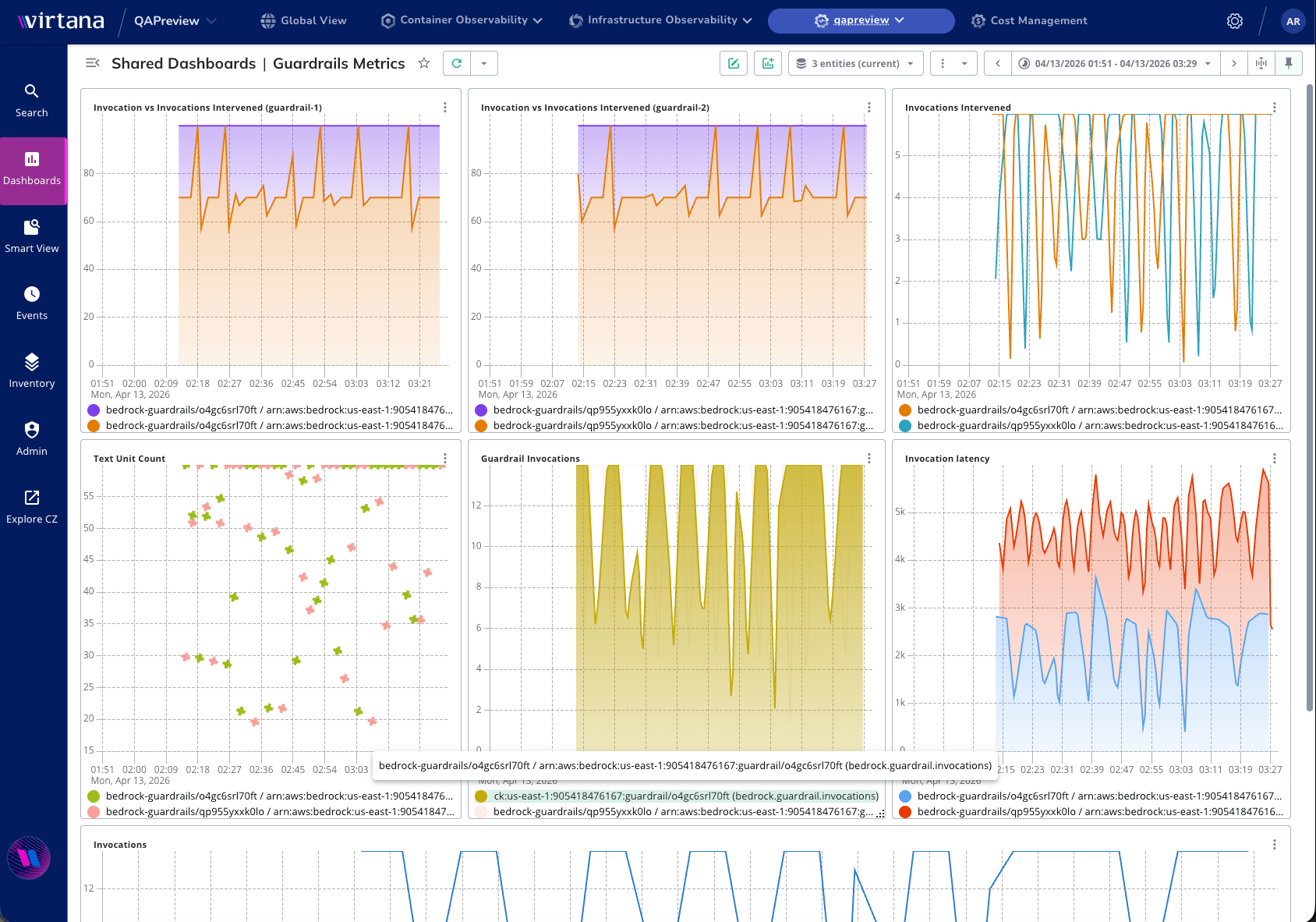

AI Agent Observability Platform

AI Security & Guardrails

- Monitor latency, interventions, and anomalies.

- Track invocation behavior and policy enforcement.

- Ensure safe, compliant AI operations.